Clustering Basics

Clustering is the use of multiple computers and redundant interconnections to form what appears to be a single, highly available system. A cluster provides protection against downtime for important applications or services that need to be always available by distributing the workload among several computers in such a way that, in the event of a failure in one system, the service will be available on another.

The basic concept of a cluster is easy to understand; a cluster is two or more systems working in concert to achieve a common goal. Under Windows, two main types of clustering exist: scale-out/availability clusters known as Network Load Balancing (NLB) clusters, and strictly availability-based clusters known as failover clusters. Microsoft also has a variation of Windows called Windows Compute Cluster Server.

When a computer unexpectedly fails or is intentionally taken down, clustering ensures that the processes and services it was running switch, or "fail over," to another machine in the cluster. This happens without interruption or the need for immediate administrator intervention, providing a high-availability solution in which critical data remains available at all times.

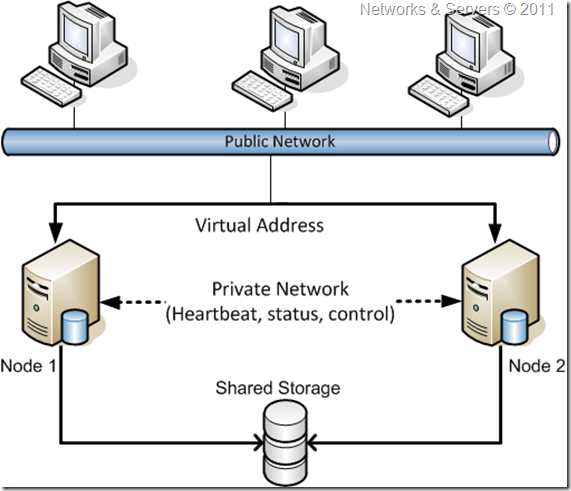

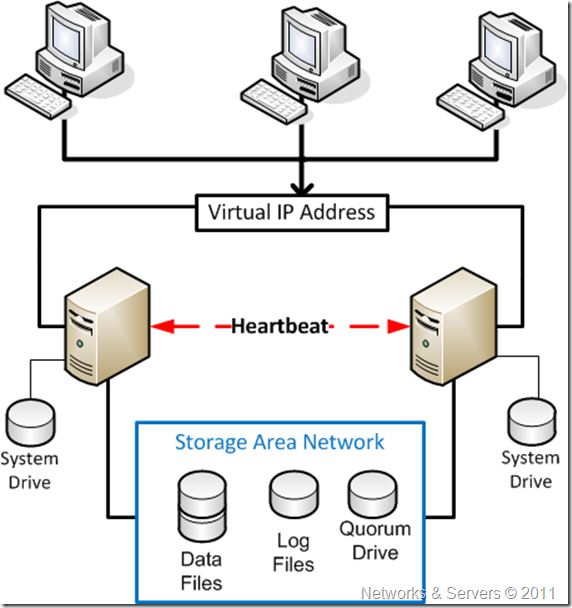

Failover clusters are typically made up of two servers (or occasionally several servers), such as the configuration shown in the figure. One server (the primary) actively processes client requests and provides the services in normal situations, while the other server (the failover) monitors the primary server so that it can take over and run the services in the event of a failure.

The primary system is monitored, with active checks every few seconds to ensure that the primary system is operating correctly. When the cluster consists only of two servers, the monitoring can happen on a dedicated cable that interconnects the two machines, or on the network. The system performing the monitoring may be either the failover computer or an independent system (called the cluster controller).

From a client point of view, the application is accessible via a DNS name, which in turn maps to a virtual IP address that can float from one machine to another, depending on which machine is active. If the active system fails, or if components associated with it (such as network hardware) fail, the monitoring system detects the failure and the failover system takes over operation of the service.
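The monitoring and takeover behavior described above can be sketched as a small simulation. This is a minimal illustration, not how any particular cluster product works: the `Node` and `FailoverPair` names and the `max_missed` threshold are assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    healthy: bool = True


class FailoverPair:
    """Toy model of a two-node failover cluster with a floating virtual IP."""

    def __init__(self, primary: Node, standby: Node, max_missed: int = 3):
        self.nodes = [primary, standby]
        self.active = primary         # node currently holding the virtual IP
        self.max_missed = max_missed  # hypothetical threshold, not a product default
        self.missed = 0               # consecutive failed health checks

    def heartbeat(self) -> str:
        """Run one health check and return the name of the active node."""
        if self.active.healthy:
            self.missed = 0
            return self.active.name
        self.missed += 1
        if self.missed >= self.max_missed:
            # Move the virtual IP to a healthy node other than the failed one.
            for node in self.nodes:
                if node is not self.active and node.healthy:
                    self.active = node
                    self.missed = 0
                    break
        return self.active.name
```

In this sketch, a primary that stops responding keeps the virtual IP until `max_missed` consecutive checks have failed, after which the standby becomes the active node. Clients keep using the same name and address throughout.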

A key element of failover clustering solutions is that both computers share a common file system. One approach is to provide this by using a dual-ported RAID (Redundant Array of Independent Disks), so that the disk subsystem is not dependent on any single disk drive. An alternative approach is to use a SAN (Storage Area Network).

A failover cluster provides:

- High availability, by reducing unplanned downtime and increasing the reliability of services and applications;

- High scalability, by allowing administrators to assign up to 16 nodes to one cluster, which enhances performance and availability and allows for incremental growth.

Comparing Clusters

As we have already seen in previous posts, NLB clusters are primarily meant to provide high availability to services that rely on the TCP/IP protocol. NLB works as a load balancer, distributing the load as evenly as possible among multiple computers, each running its own independent, isolated copy of an application such as IIS. An NLB cluster adds availability as well as scalability to web servers or FTP servers. Because load balancing is not a Windows-specific concept, it can also be achieved with hardware load balancers.

A Windows feature-based NLB implementation is one where multiple servers (up to 32) run independently of one another and do not share any resources. Client requests connect to the farm of servers and can be sent to any of them, since they all provide the same functionality. The algorithms behind NLB keep track of which servers are busy, so when a request comes in, it is sent to a server that can handle it. In the event of an individual server failure, NLB knows about the problem and can be configured to automatically redirect the connection to another server in the NLB cluster. NLB cannot be configured on servers that are participating in a failover cluster, so it can only be used with standalone servers.

Failover clusters are probably the most common type of cluster. They consist of servers that can handle and trade workloads for stateful applications (those that have long-running in-memory state or frequently updated data), such as e-mail, database, file, print, and virtualization services.

A Windows failover cluster’s purpose is to help maintain client access to applications and server resources even in the event of some sort of outage (natural disaster, software failure, server failure, etc.). The general idea of availability behind a failover cluster implementation is that client machines and applications do not need to worry about which server in the cluster is actively running a given resource; the name and IP address of whatever is running within the failover cluster is virtualized. This means the application or client connects to a single name or IP address, but behind the scenes, the resource that is being accessed can be running on any server that is part of the cluster.

Comparing NLB with failover clustering, we could say that clustering is the use of multiple computers to provide a single service, while load balancing is a technique for using the multiple computers in a cluster. In other words, a cluster is an object and load balancing is a method.

So, let’s compare these two cluster types in Windows Server 2008:

| Feature | NLB | Failover Clustering |
| --- | --- | --- |
| Primary usage | Scaling-out workloads, provide some fault tolerance for stateless applications | Increased reliability of software, services and network connections |
| Common workloads | IIS, ISA Server | E-mail, databases, file services, print services, virtualization |
| Failover transparency | Possibly some interruption for clients | In most cases, completely seamless for clients |
| Supported nodes | 32 | 16 |

Cluster Terminology

Failover Cluster

A cluster is a group of independent computers, also known as nodes, that are linked together to provide highly available resources for a network. Each node that is a member of the cluster has both its own individual disk storage and access to a common disk subsystem. When one node in the cluster fails, the remaining node or nodes assume responsibility for the resources that the failed node was running. This allows users to continue to access those resources while the failed node is out of operation.

Node

A server in the failover cluster is known as a node; nodes are simply the servers that are members of the cluster.

Geocluster

Clusters can be deployed in a server farm in a single physical facility or in different, geographically separated facilities for added resiliency. The latter type of cluster is often referred to as a geocluster. Geoclusters became very popular as a tool for implementing business continuance because they reduce the time it takes for an application to be brought online after the servers in the primary site become unavailable, which ultimately improves the recovery time objective (RTO).

Cluster networks

The nodes in a cluster communicate over a public and a private network. The public network is used to receive client requests, while the private network is mainly used for monitoring. The nodes monitor the health of each other by exchanging heartbeats on the private network and if this network becomes unavailable, they can use the public network. There can be more than one private network connection for redundancy but most deployments simply use a different VLAN for the private network connection. Alternatively, it is also possible to use a single LAN interface for both public and private connectivity, but this is not recommended for redundancy reasons.

Logical host

The term Logical Host or Cluster Logical Host is used to describe the network address which is used to access services provided by the cluster. This logical host identity is not tied to a single cluster node as it is actually a network address/hostname that is linked with the service(s) provided by the cluster. If a cluster node with a running database goes down, the database will be restarted on another cluster node, and the network address that the users use to access it will point them to the new node so that users can access the database again.

Failover

Failover occurs when a clustered resource fails on one server and another server takes over the management of the resource.

Failback

When the server which dropped out of the cluster returns to service and rejoins the cluster, the services or applications which previously failed over to another node can now return to the server on which they originally ran. This is called failback.

Quorum

The quorum for a cluster can be seen as just the number of nodes that must be online for that cluster to continue running. But there is more to it. For example, if all communication between the nodes in a two-node cluster is lost, both nodes will try to bring the same group online, which results in an active-active scenario. Incoming requests go to both nodes, which then try to write to the shared disk, thus causing data corruption. This is commonly referred to as the split-brain problem.

The mechanism that protects against this problem is the quorum, and only one node in the cluster owns the quorum at any given time. The key concept is that when all communication is lost, the node that owns the quorum is the one that can bring resources online, while if partial communication still exists, the node that owns the quorum is the one that can initiate the move of an application group.

Quorum implementation in Windows Server

When a failover cluster is brought online (assuming one node at a time), the first disk brought online is the one associated with the quorum model deployed. To do this, the failover cluster executes a disk arbitration algorithm to take ownership of that disk on the first node, initially marking it as offline and then going through a few checks. When the cluster is satisfied that there are no problems with the quorum, it is brought online. The same thing happens with the other disks. After all the disks come online, the Cluster Disk Driver sends periodic reservations every 3 seconds to keep ownership of the disk.

If for some reason the cluster loses communication over all of its networks, the quorum arbitration process begins. The outcome is straightforward: the node that currently owns the reservation on the quorum is the defending node, and the other nodes become challengers. When a challenger detects that it cannot communicate, it issues a request to break any existing reservations, via a bus-wide SCSI reset in Windows Server 2003 and a persistent reservation in Windows Server 2008. Seven seconds after this reset happens, the challenger attempts to gain control of the quorum, and then a few things can happen:

- If the node that already owns the quorum is up and running, it still has the reservation on the quorum disk, so the challenger cannot take ownership and shuts down its Cluster Service;

- If the node that owns the quorum fails and gives up its reservation, then the challenger can take ownership after 10 seconds elapse. The challenger can reserve the quorum, bring it online, and subsequently take ownership of other resources in the cluster;

- If no node of the cluster can gain ownership of the quorum, the Cluster Service is stopped on all nodes.
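The three outcomes listed above can be written down as a small decision function. This is a simplified sketch under stated assumptions: the function and enum names are invented for illustration, only the 3-second renewal and 7-second wait come from the text, and real arbitration involves SCSI reservations that are not modeled here.

```python
from enum import Enum

RESERVATION_RENEW_INTERVAL_S = 3  # defender renews its reservation (from the text)
CHALLENGER_WAIT_S = 7             # challenger waits after breaking the reservation


class Outcome(Enum):
    DEFENDER_KEEPS_QUORUM = "defender keeps the quorum; challenger stops its Cluster Service"
    CHALLENGER_TAKES_QUORUM = "challenger reserves the quorum and brings resources online"
    CLUSTER_STOPS = "no node owns the quorum; Cluster Service stops on all nodes"


def arbitrate(defender_running: bool, challenger_can_reserve: bool) -> Outcome:
    """Return the arbitration outcome for one defender and one challenger."""
    # A running defender re-reserves the disk before the challenger retries,
    # because its renewal interval is shorter than the challenger's wait.
    defender_renews = (
        defender_running and RESERVATION_RENEW_INTERVAL_S < CHALLENGER_WAIT_S
    )
    if defender_renews:
        return Outcome.DEFENDER_KEEPS_QUORUM
    if challenger_can_reserve:
        return Outcome.CHALLENGER_TAKES_QUORUM
    return Outcome.CLUSTER_STOPS
```

The design point this illustrates is the timing relationship: because the renewal interval is shorter than the challenger's wait, a healthy defender always has a chance to reassert ownership, which prevents two nodes from owning the quorum at once.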

Applications

Running an application on a server that has clustering software installed does not mean that the application will benefit from clustering. Unless an application is cluster-aware, a crash of the application process does not necessarily cause a failover to the process running on the redundant machine. For this to happen, you need a cluster-aware application, and each vendor of cluster software provides built-in support for certain applications.

Resources

Resources are the applications, services, or other elements under the control of the Cluster Service. A clustered application has individual resources, such as an IP address, a physical disk, and a network name.

Resource Monitor

Resource monitors check their assigned resources and notify the Cluster Service if there is any change in the resource state.

Resource group

One key concept with clusters is the group. This term refers to a set of dependent resources that are grouped together. Individual resources are contained in a cluster resource group, which is similar to a folder on a hard drive that contains files. The group is the unit of failover; in other words, it is the bundling of all the resources that constitute an application, including its IP address, its name, the disks, and so on. A resource group is the smallest unit of failover: individual resources cannot fail over on their own. That is, all elements that belong to a single resource group have to exist on a single node.

One example of the grouping of resources could be a “shared folder” application, its IP address, the disk that this application uses, and the network name. If any one of these resources is not available, for example if the disk is not reachable by this server, the group fails over to the redundant machine. The failover of a group from one machine to another one can be automatic, when a key resource in the group fails, or manual.
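The "shared folder" example can be modeled in a few lines to show that the group, not the individual resource, is what moves. This is an illustrative sketch only; `ResourceGroup`, its fields, and the node names are hypothetical, and the model assumes every resource comes up cleanly on the new node.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class ResourceGroup:
    name: str
    owner: str  # node currently hosting every resource in the group
    resources: Dict[str, bool] = field(default_factory=dict)  # resource -> healthy?

    def check(self, standby: str) -> str:
        """Fail the whole group over to `standby` if any resource is unhealthy."""
        if not all(self.resources.values()):
            self.owner = standby
            # Assumption: all resources start successfully on the new node.
            self.resources = {name: True for name in self.resources}
        return self.owner


def move(group: ResourceGroup, target: str) -> str:
    """Manual failover: an administrator moves the group to another node."""
    group.owner = target
    return group.owner
```

For example, a group holding a file share, an IP address, a disk, and a network name stays on its owner while all four are healthy; marking only the disk as unhealthy causes `check` to move all four together.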

Dependencies

Some resources need other resources in order to run successfully; that is, they depend on another resource (or resources) within a group. A dependent resource cannot start until the resource it depends on is online. For example, a file share needs a physical disk to hold the data that will be accessed through the share. Similarly, in a clustered SQL Server implementation, SQL Server Agent depends on SQL Server, which must start before SQL Server Agent can come online. If SQL Server cannot be started, SQL Server Agent will not be brought online either.

These relationships are known as resource dependencies. When one resource is defined as a dependency for another resource, both resources must be placed in the same group. If a number of resources are ultimately dependent on one resource (for example, one physical disk resource), all of those resources must belong to the same group. Dependencies are used to define how different resources relate to one another. These interdependencies control the sequence in which the Cluster Service brings resources online and takes them offline.
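One way to picture how dependencies control the online and offline sequence is a topological sort of the dependency graph. The sketch below uses Python's standard `graphlib` module (Python 3.9 or later); the resource names and the specific dependencies in the example group are assumptions chosen for illustration, not a statement of how the Cluster Service is implemented.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Hypothetical group: each resource maps to the resources it depends on.
dependencies: Dict[str, Set[str]] = {
    "Physical Disk": set(),
    "IP Address": set(),
    "Network Name": {"IP Address"},
    "File Share": {"Physical Disk", "Network Name"},
}


def online_order(deps: Dict[str, Set[str]]) -> List[str]:
    """Order in which resources can be brought online (dependencies first)."""
    return list(TopologicalSorter(deps).static_order())


def offline_order(deps: Dict[str, Set[str]]) -> List[str]:
    """Order in which resources are taken offline (dependents first)."""
    return list(reversed(online_order(deps)))
```

With this graph, the file share always comes online last and goes offline first. The relative order of resources that do not depend on each other, such as the disk and the IP address, is not fixed.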

Resource states

Resources can exist in one of five states:

- Offline: the resource is not available for use by any other resource or client;

- Offline Pending: this is a transitional state while the resource is being taken offline;

- Online: the resource is available;

- Online Pending: this is a transitional state while the resource is being brought online;

- Failed: there is a problem with the resource that the Cluster Service cannot resolve.

One can specify the amount of time that Cluster Service allows for specific resources to go online or offline. If the resource cannot be brought online or offline within this time, the resource is placed in the failed state.
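The five states and the pending timeout can be captured in a small state model. This is a sketch under assumptions: the `Resource` class, its method names, and the default timeout value are hypothetical, and elapsed time is passed in as a number rather than measured, so the example stays deterministic.

```python
from enum import Enum


class State(Enum):
    OFFLINE = "Offline"
    OFFLINE_PENDING = "Offline Pending"
    ONLINE = "Online"
    ONLINE_PENDING = "Online Pending"
    FAILED = "Failed"


class Resource:
    def __init__(self, name: str, pending_timeout_s: float = 180.0):
        self.name = name
        self.pending_timeout_s = pending_timeout_s  # hypothetical value
        self.state = State.OFFLINE

    def bring_online(self, startup_time_s: float) -> State:
        """Simulate a start that takes `startup_time_s` seconds."""
        self.state = State.ONLINE_PENDING
        # The transition fails if it does not complete within the timeout.
        if startup_time_s <= self.pending_timeout_s:
            self.state = State.ONLINE
        else:
            self.state = State.FAILED
        return self.state

    def take_offline(self, shutdown_time_s: float) -> State:
        """Simulate a stop that takes `shutdown_time_s` seconds."""
        self.state = State.OFFLINE_PENDING
        if shutdown_time_s <= self.pending_timeout_s:
            self.state = State.OFFLINE
        else:
            self.state = State.FAILED
        return self.state
```

A resource that finishes its transition within the allowed time ends up Online or Offline; one that takes longer ends up Failed, matching the rule described above.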

Witness

A witness element, either a disk witness or a file share witness, is used only in some clusters, usually those with an even number of nodes. It acts as a tiebreaker when there are failures and the remaining nodes must determine whether enough nodes are currently running to work together as a cluster.
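The tiebreaker role is easiest to see with a vote count. The sketch below assumes a simple majority model in which each node has one vote and a configured witness adds one more; the function name and parameters are hypothetical, and actual quorum modes vary by Windows Server version and configuration.

```python
def has_quorum(
    total_nodes: int,
    nodes_up: int,
    witness_configured: bool = False,
    witness_up: bool = False,
) -> bool:
    """Return True if the running voters form a strict majority of all votes."""
    total_votes = total_nodes + (1 if witness_configured else 0)
    votes_present = nodes_up + (1 if witness_configured and witness_up else 0)
    return 2 * votes_present > total_votes
```

In a four-node cluster split two and two, neither half has a majority without a witness. With a witness, the half that can reach it holds three of five votes and keeps running, which is why a witness is mostly useful when the node count is even.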

251 comments:

1 – 200 of 251 Newer› Newest»Great blog post. The way you are explaining is very clear.

Great post and I love the way you have described everything about this concept. inMotion Review

I’m happy I located this blog! From time to time, students want to cognitive the keys of productive literary essays composing. Your first-class knowledge about this good post can become a proper basis for such people. nice onedata science malaysia

This website is remarkable information and facts it's really excellentdata science malaysia

This is a great post I saw thanks to sharing. I really want to hope that you will continue to share great posts in the future.

https://360digitmg.com/india/data-science-using-python-and-r-programming-in-delhi

I’m amazed, I must say. Seldom do I come across a blog that’s both equally educative and interesting, and let me tell you, you've hit the nail on the head.

Best Monitor 24"

The problem is an issue that too few folks are speaking intelligently about. I'm very happy that I found this during my search for something regarding this.

Best Monitor Guide

Best DJ

DJ Store

Gift Card Generator

Gift Card Generator

Gift Card Generator

Instagram Followers generator

Instagram Followers Generator

Gift Card Generator

Software IT Coaching Center in Chennai | Drilling consultants

Your content is very unique and understandable useful for the readers keep update more article like this.

business analytics courses in aurangabad

wow, it's a nice article and gets the most useful information from this website and I had bookmarked this website. www.matka143.in for the future access. because after reading this article I got very useful information from your website and I hope you will Satta Matka keep writing this type of content.

This all seems to perpetuate the myth that Vader was evil. In fact, Vader was a hard man but those were hard times and he had to keep the Empire together. The real problem was the Jedi Knights who were blocking progress and military reform. They had to go.. satta matka

hey.

I had just read your content and find very informative knowledge from this article.

we have a game website where you can get the game of real money winning which name is Satta Matka you will find the amazing interface to play all the game.

--------------------------------------------------------------------

I was born forty-nine years ago, less a day. I was born a slave, as billions are born slaves. When I was a child I did not immediately imagine that I deserved freedom, for this was not my mother's attitude. Suffering was to be endured. She admitted a patient hope for less cruel masters, when we were between them. She taught that if freedom was in our destinies, fate would find us. satta matka

The Way you are explaning is fantastic and I impressed while reading this article Please keep writing this king of amazing content satta Matka

This is a good tip especially for those new to the blogosphere. Brief but very precise info… Appreciate your sharing this one. A must-read post!

Buy 100 Instagram Views

Quality Content writing is main issue for traffic generate. Thanks for sharing satta matka

Thanks for Sharing This Article.It is very so much valuable content. I hope these Commenting lists will help to my website Satta Matkan https://matka143.in

Thank you for sharing it. It is very helpful.

Nehru Quotes

thankyou sir for sharing this valuable content.

i have a furniture website you can visit on that website to buy the home furniture in Kenya

thankyou sir for sharing this valuable content.

Satta Matka is the Most Popular game in Western Mumbai.

also South. The Game is to Provide a Fixed Number of Client. but must be important who's your broker. satta matka result

thankyou sir for sharing this valuable content.

I love your content it's very unique.

Online Google Ads Course

SEO is one of the main techniques of digital marketing. There are many open spaces in this area and with the growing pattern, it is likely to continue in this internet age as well.

seo course in noida

Your articles are to a great degree bewildering and I got a huge amount of information and headingsatta matka

understanding them Awesome Blog! you'd an uncommon action in your article. The substance is excessively awesome.

i have a furniture website you can visit on that website to buy the home furniture in Kenya

Any service or product that sounds to good to be true usually is, as much as people do not want to believe this when you are confronted with what seems to be a great offer its sadly the nature of business. Satta Matka | Indian Matka | Kalyan Matka | Madhur Matka | Kapil Matka | Dpboss | Fix Matka

thankyou sir for sharing this valuable content.

I love your content it's very unique.

DigiDaddy World

I like your post. Everyone should do read this blog. Because this blog is important for all now I will share this post. Thank you so much for share with us. Type Calendar

Guru ji home tutorial best class in delhi and chandigarh . we proviode best teacher and best learning processs .

home tutor in delhi

This is most informative and also this post most user friendly and super navigation to all posts... Thank you so much for giving this information to me..

machine learning course aurangabad

Exactly what i was looking for, pretty much explained everything here and thank you for such an awesome article. I provide solar system in Pakistan, and i thought you might want to read more about it. Feel free to check my solar blog.

Thanks for the information about Blogspot very informative for everyone

artificial intelligence course

This is my first time on your blog and i am your fan now. Thanks for sharing such a great article, keep it up.. 🙂

Hey, Your article is just amazing, It was just i was looking for. Thanks for sharing lovely article.

E-commerce website development in india

Digital Marketing Company In India

Seo Company In Varanasi

Website design company in varanasi

cheap website design company bangalore

health and beauty products manufacturer

Bed net canopy manufacturer

Broken Hair Finishing Rod Manufacturer

Eyebrow Pencil Manufacturer

Health And Beauty Products Manufacturer

Black satta king

Is A Reliable And Trusted Website To Check Satta King Daily Results Online. Here You Will Get Info of Daily Lucky Numbers That Declared As Winning Numbers. Here You Can Also Check The Results of Popular Satta Companies Like Desawar, Taj, Faridabad, Ghaziabad, And Gali. When These Companies Declare Their outcomes, We Will Update Them Here. This Website Is A Best Source To Get Black satta king

Results. To Get Fast Results Just Bookmark This Website And Visit It Time To Time. We, Will, Update Every Company Results ASAP. Thus, You Can Check Your Results Without Any Delay And Can Claim Your Money Fast. In this way, If You Are Looking For Satta King Results Online, Then You Have No Need To Go Anywhere

Siberian Husky Puppies For Sale Near Me

Siberian Husky Puppies

Siberian Husky Puppies For Sale Near Me

Siberian Husky Puppies For Sale Near Me

This is an very informative post, looking for more. please use fyndhere to find nearby stores on fyndhere ios and andriod app

You’ve made some decent points there. I checked on the internet for more info about the issue and found most individuals will go along with your views on this website. data analytics course in delhi

Thanks for a nice post. I really love it. It's so good and so awesome. Satta Matka or simply Matka is Indian game. Matkaoffice.net is world's fastest matka official website. Welcome to top matka world SATTA MATKAsatta matka.

Thanks for this post TechESam

I am looking for thisinformative post thanks for share it

How do I fix Yahoo mail not working on Mac?

Sometimes pernicious software can enter into your system when you download any software unintentionally. Such malware can stop the working of apps like your Browser and Mac Mail. In such a case, you need to scan your Mac with an excellent antivirus to confirm the suspicion to fix the Yahoo mail not working on Mac issue.

Also Read:-

yahoo mail not syncing

how do i talk to a person at verizon customer service

how do i speak to a live person at google

my facebook is not working

I’m impressed, I must say. Seldom do I come across a blog that’s both educative and interesting, and without a doubt, you have hit the nail on the head. The problem is an issue that not enough folks are speaking intelligently about. Now i'm very happy I stumbled across this during my hunt for something regarding this.leather harley jackets

MSBI Online training with 100% job Assistance and 24 X 7 Online Support. Visit us

about online mulesoft training | mulesoft online course

Contact Information:

USA: +1 7327039066

INDIA: +91 8885448788 , 9550102466

Email: info@onlineitguru.com

Full stack Training in kolkata

very nice information regarding the application.

Full Stack Classes in Kolkata

This could be applicable to AWS Disaster Recovery

https://novatec.co.in/aws-training

Everything is very open with a very clear clarification of the issues. It was definitely informative. Your site is very helpful. Thanks for sharing!

ducati leather jacket

It is so nice article thank you for sharing this valuable content.

workday training

workday online training

workday course

Hello there! I just want to offer you a big thumbs up for your great info you have right here on this post. I'll be coming back to your web site for more soon.

soccar uniform

Aw, this was an exceptionally nice post. Taking a few minutes and actual effort to make a superb article… but what can I say… I put things off a lot and don't manage to get nearly anything done.

granite countertops kitchen near me are more durable than other materials. Granite Pharmaca is more stringent than the usual counter options, and it can be worn for regular wear and tear. Stands up because granite is such a hard material, it is resistant to scratches. Cutting on it will damage your knives, but not your counters. quartz countertops near me is top leading company in New Jersey for Granite countertops kitchen. Find granite countertop contractors near me .

We are looking for this informative post very thankful for share. Buy Motogp Leather Suits & Motogp Leather Jackets with worldwide free shipping.

Motogp Leather Suits

Motogp Leather Jacket

Do you want to play satta king

Lottery, found this term on the internet or heard about this unique lottery from your friends.

satta king up

satta king 786

satta king online

black satta

great info

digital marketing agency

Leather fashion jacket never runs out of style and if you’re looking for best articles regarding Harley Davidson leather Jackets, you’re just at the right place.

JnJ is a registered firm that deals with all kinds of leather jackets, including motorbike racing suits, motorbike leather jackets and leather vests, leather gloves, for both men and women.

Totally loved your article. Looking forward to see more more from you.

black satta

TODAY EARNING VERY EASY WITH PLAY BAZAAR

PLAY BAZAAR CLICK NOW

https://playbazaar.ind.in

PLAY BAZAAR BEST SATTA RESULT SITE

Play Bazaar

Play Bazaar Result

Play Bazaar

Play Bazaar CHART

Play Bazaar 2022

Play Bazaar Leak Number

Play Bazaar Record

PlayBazaar.ind.in

https://playbazaar.ind.in

Play Bazar

Play Bajar

TODAY EARNING VERY EASY WITH PLAY BAZAAR

PLAY BAZAAR CLICK NOW

https://playbazaar.ind.in

TODAY EARNING VERY EASY WITH PLAY BAZAAR

PLAY BAZAAR CLICK NOW

https://playbazaar.ind.in

TODAY EARNING VERY EASY WITH PLAY BAZAAR

PLAY BAZAAR CLICK NOW

https://playbazaar.ind.in

TODAY EARNING VERY EASY WITH PLAY BAZAAR

PLAY BAZAAR CLICK NOW

https://playbazaar.ind.in

https://playbazaar.ind.in

Earn Money Daily

CLICK HERE NOW

https://playbazaar.ind.in

Earn Money Daily

CLICK HERE NOW

PLAY BAZAAR BEST SATTA RESULT SITE

Play Bazaar

Play Bazaar Result

Play Bazaar

Play Bazaar CHART

Play Bazaar 2022

Play Bazaar Leak Number

Play Bazaar Record

PlayBazaar.ind.in

Play Bazaar Old Record

PlayBazaar

https://playbazaar.ind.in

Play Bazar

Play Bajar

TODAY EARNING VERY EASY WITH PLAY BAZAAR

PLAY BAZAAR CLICK NOW

https://playbazaar.ind.in

TODAY EARNING VERY EASY WITH PLAY BAZAAR

PLAY BAZAAR CLICK NOW

https://playbazaar.ind.in

TODAY EARNING VERY EASY WITH PLAY BAZAAR

PLAY BAZAAR CLICK NOW

https://playbazaar.ind.in

TODAY EARNING VERY EASY WITH PLAY BAZAAR

PLAY BAZAAR CLICK NOW

https://playbazaar.ind.in

https://playbazaar.ind.in

Earn Money Daily

CLICK HERE NOW

https://playbazaar.ind.in

Earn Money Daily

CLICK HERE NOW

Nice post. I am totally appreciate with blog. Thanks for sharing this knowledgeable post.

Satta King

Sattaking

Delhi Satta

Satta

Satta Matka

Delhi Satta King

Satta Matka Online

Satta Agency

All India Satta Bazar

Hello Friends,

Thank you for sharing cluster terminology. It is very nice articles.

Best hair spray

This is blog sharing hair straightening spray . Please visit above blog and get more information.

buy-tahoe-og-online

buy-gushers-online

buy-pineapple-cake-online

buy-golden-lemon-online

buy-sherb-cake-online

buy-sour-kush-online

buy-gorilla-glue-online

buy-gmo-online

Amazing Post, I Like your post if you want to aware of the stock market Get 90% accurate Share Market Tips| Indian Stock Market Tips | MCX Trading , F&O , Nifty Intraday Tips for daily Profit!!! For Free trial give a Missed Call at 083 0211 0055.

Munchkins cat breeder, we stand behind our guarantee, our customers, and our kittens! We go to great efforts to ensure that our kittens are healthy and socialized with an excellent demeanor that is native to the breed of each kitten.

munchkin kittens for sale

munchkin kittens

munchkin for sale

munchkin cats for sale

munchkin cat | munchkin cat for sale | munchkin kittens for sale | munchkin cats for sale | munchkin cat breeders | munchkin kittens for sale near me | munchkin kitten for sale | munchkin cats | munchkin kittens for sale near me | munchkin kittens | buy munchkin cats | munchkin kitten for sale | munchkin cat for sale | standard munchkin kitten for sale | dwarf cats for sale | cat breeders near me | munchkin breeders near me | white munchkin cats | dwarf cats | short legged munchkin kittens for sale | short legged kittens for sale

savannah cat price

savannah cats for sale

savannah kittens

pug puppies for sale

savannah cat for sale

Serval kitten for sale,

savannah kittens for sale, serval cat for sale, savannah cat for sale, savannah cats for sale, f1 savannah cat for sale, serval cat for sale, exotic cats for sale, f1 savannah cat, f4 savannah cat, cats for sale, savannah cats, Serval kittens for sale, savannah kittens for sale near me, savannah cats for sale near me, bengal kittens for sale, bengal kittens for sale near me.

Your site is very informative and it has helped me a lot And what promotes my business Visit Our Site:-

Play Bazaar

Thanks for sharing, this is really helpful.

Power Bi Consulting Services

Power Bi Corporate Training

Tableau Corporate Training

Find the reasons Spectrum email isn't responding

These points can be referenced if Spectrum email is not working on your device.

1. Spectrum Email Stopped Working on iPhone

If you're using an iPhone and discover that Spectrum Email is not working once more, make sure that your email settings are set up correctly. You'll need to enter your username and password for the Spectrum Email account on iPhone.
